But now the problem is how we can concatenate these three input columns. So, the solution to this problem is Expressions i.e. we can write a following expression (Expression starts with equal (=) sign):- =First_name+” ”+Middle_name+” ”+Last_name

Let us make this question clearer (Question modified a little bit for more understanding) –

While moving the input data into a table, user wants a new column (i.e. Gender_name) by referencing to the column “GenderID” which means –

Before we begin our in depth study on 2 above scenarios, firstly let’s create a table.

This is all about the two scenarios. Now, I am moving ahead and will execute my SSIS Derived Column Transformation package. Let’s see what will happen now.

Lookup Transformation in SSIS:

a SQL Server Integration Services (SSIS) package that you want to perform a lookup in order to supplement or validate the data in your data flow. A lookup lets you access data related to your current dataset without having to create a special structure to support that access.

To facilitate the ability to perform lookups, SSIS includes the Lookup transformation, which provides the mechanism necessary to access and retrieve data from a secondary dataset. The transformation works by joining the primary dataset (the input data) to the secondary dataset (the referenced data). SSIS attempts to perform an equi-join based on one or more matching columns in the input and referenced datasets, just like you would join two tables in in a SQL Server database.

Because SSIS uses an equi-join, each row of the input dataset must match at least one row in the referenced dataset. The rows are considered matching if the values in the joined columns are equal. By default, if an input row cannot be joined to a referenced row, the Lookup transformation treats the row as an error. However, you can override the default behavior by configuring the transformation to instead redirect any rows without a match to a specific output. If an input row matches multiple rows in the referenced dataset, the transformation uses only the first row. The way in which the other rows are treated depends on how the transformation is configured.

The Lookup transformation lets you access a referenced dataset either through an OLE DB connection manager or through a Cache connection manager. The Cache connection manager accesses the dataset held in an in-memory cache store throughout the duration of the package execution. You can also persist the cache to a cache file (.caw) so it can be available to multiple packages or be deployed to several computers.

The best way to understand how the Lookup transformation works is to see it in action. In this article, we’ll work through an example that retrieves employee data from the AdventureWorks2008R2 sample database and loads it into two comma-delimited text files. The database is located on a local instance of SQL Server 2008 R2. The referenced dataset that will be used by the Lookup transformation is also based on data from that database, but stored in a cache file at the onset of the package execution.

The first step, then, in getting this example underway is to set up a new SSIS package in Business Intelligence Development Studio (BIDS), add two Data Flow tasks to the control flow, and connect the precedence constraint from the first Data Flow task to the second Data Flow task, as I’ve done in Figure 1.

Figure 1: Adding data flow components to your SSIS package

Notice that I’ve named the fist Data Flow task Load data into cache and the second one Load data into file. These names should make it clear what purpose each task serves. The Data Flow tasks are also the only two control flow components we need to add to our package. Everything else is at the data flow level. So let’s get started.

Writing Data to a Cache

Because we’re creating a lookup based on cached data, our initial step is to configure the first data flow to retrieve the data we need from the AdventureWorks2008R2 database and save it to a cache file. Figure 2 shows what the data flow should look like after the data flow has been configured to cache the data. As you can see, you need to include only an OLE DB source and a Cache transformation.

Figure 2: Configuring the data flow that loads data into a cache

Before I configured the OLE DB source, I created an OLE DB connection manager to connect to theAdventureWorks2008R2 database on my local instance of SQL Server. I named the connection managerAdventureWorks2008R2.

I then configured the OLE DB source to connect to the AdventureWorks2008R2 connection manager and to use the following T-SQL statement to retrieve the data to be cached:

USE AdventureWorks2008R2;

GO

SELECT

BusinessEntityID,

NationalIDNumber

FROM

HumanResources.Employee

WHERE

BusinessEntityID < 250;

Notice that I’m retrieving a subset of data from the HumanResources.Employee table. The returned dataset includes two columns: BusinessEntityID and NationalIDNumber. We will use the BusinessEntityID column to match rows with the input dataset in order to return a NationalIDNumber value for each employee. Figure 3 shows what your OLE DB Source editor should look like after you’ve configured it with the connection manager and T-SQL statement.

Figure 3: Using an OLE DB source to retrieve data from the AdventureWorks2008R2 database

You can view a sample of the data that will be cached by clicking the Preview button in the OLE DB Source editor. This launches the Preview Query Results dialog box, shown in Figure 4, which will display up to 200 rows of your dataset. Notice that a NationalIDNumber value is associated with each BusinessEntityID value. The two values combined will provide the cached data necessary to create a lookup in your data flow.

Figure 4: Previewing the data to be saved to a cache

After I configured the OLE DB source, I moved on to the Cache transformation. As part of the process of setting up the transformation, I first configured a Cache connection manager. To do so, I opened the Cache Transformationeditor and clicked the New button next to the Cacheconnection manager drop-down list. This launched the Cache Connection Manager editor, shown in Figure 5.

Figure 5: Adding a Cache connection manager to your SSIS package

I named the Cache connection manager NationalIdCache, provided a description, and selected the Use File Cache checkbox so my cache would be saved to a file. This, of course, isn’t necessary for a simple example like this, but having the ability to save the cache to a file is an important feature of the SSIS lookup operation, so that’s why I’ve decided to demonstrate it here.

Next, I provided and path and file name for the .caw file, and then selected the Columns tab in the Cache Connection Manager editor, which is shown in Figure 6.

Figure 6: Configuring the column properties in your Cache connection manager

Because I created my Cache connection manager from within the Cache transformation, the column information was already configured on the Columns tab. However, I had to change the Index Position value for theBusinessEntityID column from 0 to 1. This column is an index column, which means it must be assigned a positive integer. If there are more than one index columns, those integers should be sequential, with the column having the most unique values being the lowest. In this case, there is only one index column, so I need only assign one value. The NationalIDNumber is a non-index column and as such should be configured with an IndexPosition value of 0, the default value.

When a Cache connection manager is used in conjunction with a Lookup transformation, as we’ll be doing later in this example, the index column (or columns) is the one that is mapped to the corresponding column in the input dataset. Only index columns in the referenced dataset can be mapped to columns in the input dataset.

After I set up the Cache connection manager, I configured the Cache transformation. First, I confirmed that the Cacheconnection manager I just created is the one specified in the Cache connection manager drop-down list on theConnection Manager page of the Cache Transformation editor, as shown in Figure 7.

Figure 7: Setting up the Cache transformation in your data flow

Next, I confirmed the column mappings on the Mappings page of the Cache Transformation editor. Given that I hadn’t changed any column names along with way, these mappings should have been done automatically and appear as they do in Figure 8.

Figure 8: Mapping columns in the Cache transformation

That’s all there is to configuring the first data flow to cache the referenced data. I confirmed that everything was running properly by executing only this data flow and then confirming that the .caw file had been created in its designated folder. We can now move on to the second data flow.

Performing Lookups from Cached Data

The second data flow is the one in which we perform the actual lookup. We will once again retrieve employee data from the AdventureWorks2008R2 database, look up the national ID for each employee (and adding it to the data flow), and save the data to one of two files: the first for employees who have an associated national ID and the second file for those who don’t. Figure 9 shows you what your data flow should look like once you’ve added all the components.

Figure 9: Configuring the data flow to load data into text files

The first step I took in setting up this data flow was to add an OLE DB source and configure it to connect to theAdventureWorks2008R2 database via to the AdventureWorks2008R2 connection manager. I then specified that the source component run the following T-SQL statement in order to retrieve the necessary employee data:

SELECT

BusinessEntityID,

FirstName,

LastName,

JobTitleFROM

HumanResources.vEmployee;

The data returned by this statement represents the input dataset that will be used for our lookup operation. Notice that the dataset includes the BusinessEntityID column, which will be used to map this dataset to the referenced dataset. Figure 10 shows you what the Connection Manager page of the OLE DB Source editor should look like after you’ve configured that connection manager and query.

Figure 10: Configuring an OLE DB source to retrieve employee data

As you did with the OLE DB source in the first data flow, you can preview the data returned by the SELECTstatement by clicking the Preview button. Your results should look similar to those shown in Figure 11.

Figure 11: Previewing the employee data returned by the OLE DB source

My next step was to add a Lookup transformation to the data flow. The transformation will attempt to match the input data to the referenced data saved to cache. When you configure the transformation you can choose the cache mode and connection type. You have three options for configuring the cache mode:

- Full cache: The referenced dataset is generated and loaded into cache before the Lookup transformation is executed.

- Partial cache: The referenced dataset is generated when the Lookup transformation is executed, and the dataset is loaded into cache.

- No cache: The referenced dataset is generated when the Lookup transformation is executed, but no data is loaded into cache.

For this exercise, I selected the first option because I am generating and loading the data into cache before I run theLookup transformation. Figure 12 shows the General page of the Lookup Transformation editor, with the Full Cache option selected.

Figure 12: Configuring the General page of the Lookup Transformation editor

Notice that the editor also includes the Connection type section, which supports two options: Cache Connection Manager and OLE DB Connection Manager. In this case, I selected the Cache Connection Manager option because I will be retrieving data from a cache file, and this connection manager type is required to access the data in that file.

As you can see in Figure 12, you can also choose an option from the drop-down list Specify how to handle rows with no matching entries. This option determines how rows in the input dataset are treated if there are no matching rows in the referenced database. By default, the unmatched rows are treated as errors. However, I selected the Redirect rows to no match output option so I could better control the unmatched rows, as you’ll see in a bit.

After I configured the General page of the Lookup Transformation editor, I moved on to the Connection page and ensured that the Cache connection manager named NationalIdCache was selected in the Cache Connection Manager drop-down list. This is the same connection manager I used in the first data flow to save the dataset to a cache file. Figure 13 shows the Connection page of the Lookup Transformation editor with the specified Cache connection manager.

Figure 13: Configuring the Connection page of the Lookup Transformation editor

Next, I configured the Columns page of the Lookup Transformation editor, shown in Figure 14. I first mapped theBusinessEntityID input column to the BusinessEntityID lookup column by dragging the input column to the lookup column. This process created the black arrow between the tables that you see in the figure. As a result, theBusinessEntityID columns will be the ones used to form the join between the input and referenced datasets.

Figure 14: Configuring the Columns page of the Lookup Transformation editor

Next, I selected the checkbox next to the NationalIDNumber column in the lookup table to indicate that this was the column that contained the lookup values I wanted to add to the data flow. I then ensured that the lookup operation defined near the bottom of the Columns page indicated that a new column would be added as a result of the lookup operation. The Columns page of your Lookup Transformation editor should end up looking similar to Figure 14.

My next step was to add a Flat File destination to the data flow. When I connected the data path from the Lookuptransformation to the Flat File destination, the Input Output Selection dialog box appeared, as shown in Figure 15. The dialog box let’s you chose which data flow output to send to the flat file—the matched rows or the unmatched rows. In this case, I went with the default, Lookup Match Output, which refers to the matched rows.

Figure 15: Selecting an output for the Lookup transformation

Next, I opened the Flat File Destination editor and clicked the New button next to the Flat File ConnectionManager drop-down list. This launched the Flat File Connection Manager editor, shown in Figure 16. I typed the name MatchingRows in the Connection manager name text box, typed the file nameC:\DataFiles\MatchingRows.txt in the File name text box, and left all other setting with their default values.

Figure 16: Setting up a Flat File connection manager

After I saved my connection manager settings, I was returned to the Connection Manager page of the Flat File Destination editor. The MatchingRows connection manager was now displayed in the Flat File ConnectionManager drop-down list, as shown in Figure 17.

Figure 17: Configuring a Flat File destination in your data flow

I then selected the Mappings page (shown in Figure 18) to verify that the columns were properly mapped between the data flow and the file destination. One thing you’ll notice at this point is that the data flow now includes theNationalIDNumber column, which was added to the data flow by the Lookup transformation.

Figure 18: Verifying column mappings in your Flat File destination

The next step I took in configuring the data flow was to add a second Flat File destination and connect the second data path from the Lookup transformation to the new destination. I then configured a second connection manager with the name NonMatchingRows and the file name C:\DataFiles\NonMatchingRows.txt. All rows in the input data that do not match rows in the referenced data will be directed to this file. Refer back to Figure 9 to see what your data flow should look like at this point.

Running Your SSIS Package

The final step, of course, is to run the SSIS package in BIDS. When I ran the package, I watched the second data flow so I could monitor how many rows matched the lookup dataset and how many did not. Figure 19 shows the data flow right after I ran the package.

Figure 19: Running your SSIS package

In this case, 249 rows in the input dataset matched rows in the referenced dataset, and 41 rows did not. These are the numbers I would have expected based on my source data. I also confirmed that both text files had been created and that they contained the expected data.

As you can see, the

Lookup transformation makes it relatively easy to access referenced data in order to supplement the data flow. For this example, I retrieved my referenced data from the same database as I retrieved the input data. However, that referenced data could have come from any source whose data could be saved to cache, or it could have come directly from another database through an OLE DB connection. Once you understand the principles behind the

Lookup transformation, you can apply them to your particular situation in order to create an effective lookup operation.

Cache Connection Manager

- This connection manager is to define the cache

- Cache will be stored in a memory, however we can create cache file to hold the data.

- Once we feed the data into that file (*.caw) , later in all data flow tasks, we can use connection manager and get the data from that cache file

Cache Transformation

- This is one of the Data flow transformation

- It uses memory cache / file cache as configured in Cache Connection Manager and pulls data from there

- This is a very helpful transformation in terms of performance improvement

Lookup Transformation

- Basically it is being used to lookup the data

- It is using equi-join.

- All the values from source table (joining column) SHOULD exist in Reference table. If not, it will throw an

error

- NULL value also will be considered as non-matching row

- This is CASE-SENSITIVE.

- It can internally use Cache Connection / OLEDB Connection.

- There can be 3 cache types

(A) Full Cache

- Cache connection manager can be used only with this type of cache

- In this case, when package starts, data will be pulled and kept into memory cache / file cache

and later only cache will be used and not database

- We might not get the latest data from database

(B) Partial Cache

- Cache connection manager can't be used with this

- "Advance" tab of Lookup transformation will be enabled only in case of Partial cache where one

can configure cache size

- This is bit different than Full cache

- Initially cache will be empty. For each value, first it checks in Cache, if not found then goes to

database, if found from database, then stores that value in cache so it can be used in later stage.

- Startup time will be less than Full cache but processing time will be longer

- Also lot of available memory should be there.

(C) No Cache

- Every time, it will get it from database

- There are 3 outputs

(A) Matching Row

(B) No Matching Row

(C) Error

EXAMPLE

Pre-requisite

Execute the following query in database

CREATE TABLE LookupDemo (EID int, EName varchar(10), CountryVARCHAR(10))

CREATE TABLE LookupDemoReference (Country VARCHAR(10), RegionVARCHAR(15))

CREATE TABLE LookupDemoOutput (EID int, EName varchar(10), CountryVARCHAR(10), Region VARCHAR(15))

INSERT INTO LookupDemo (EID,EName,Country) VALUES

(1,'Nisarg','India'),

(2,'Megha','INDIA'),

(3,'Swara','China'),

(4,'Nidhi','USA'),

(5,'Lalu','Japan')

INSERT INTO LookupDemoReference (Country, Region) VALUES

('India','ASIA'),

('India','ASIA-Pacific'),

('China','ASIA-Pacific'),

('USA','North America'),

('Japan','ASIA-Pacific')

Steps

1. Right click in Connection Tray and click on "New Connection"

2. select "CACHE" and click on Add

3. Check "Use File Cache" checkbox to store the cache data in a file.

4. select the file destination and give some name

5. Click on "columns" tab and start adding columns.

6. Add 2 columns (Country , Region) with IndexPosition 1 and 0 respectively.

Index Position 1 : this will be a joining key on which we will use joining conditions

Index Position 0 : Other columns

7. Drag data flow task and rename as "Build Cache"

8. Go inside DFT and drag OLEDB source which should refer to LookupDemoReference table.

9. Drag Cache Transformation and connect it with source

10. In Connection Manager, select created cache connection manager

11. click on mapping and make sure, you have proper mapping between columns.

12. Go back to control flow and drag one more data flow task and rename it to "Lookup Data"

13. Connect 1st DFT to 2nd DFT. It should look like this.

14. Go to Lookup Data and add OLEDB source which should point to LookupDemo table

15. Drag Lookup Transformation and connect it with source

16. Select "Full Cache" in General tab of Lookup Transformation. Select "Cache Connection"

17. Select created connection manager and in Connection tab

18. Do column mappings as shown below.

19. Drag OLEDB destination and connect it with Lookup Transformation. make sure to choose "Lookup Match output"

20. OLEDB destination should point to LookupDemoOutput table

20. Column mapping should be like this.

21. Now execute the package.

22. Package will be failed at lookup transformation

23. Reason of failure.

- Source table has one record with INDIA which is not available in Reference table, this signifies that

Lookup transformation is CASE-SENSITIVE

- Lookup Transformation is different then

Merge Join here. Merge join will ignore the rows if they are not

matching, but Lookup will throw an error.

24a. Open Lookup Transformation and change settings like this.

24b. Now drag

Derived Column Transformation and connect it with Lookup Transformation. Make sure Lookup No Match Output gets displayed on the arrow.

25. In Derived Column, add a new column like this. Make sure to cast it into DT_STR

26. Remove the connection between OLEDB Destination and Lookup Transformation.

26. Now drag "

Union All Transformation" and connect it with Lookup Transformation. Make sure Lookup Match Output gets displayed on the arrow.

27. Select "Invalid Column" in last row, last column

28. Connect Union All transformation to OLEDB destination

29. Whole DFT will look like this.

30. Execute the package.

31. We can see that 1 rows has been redirected as "No match output" and finally using Union All it is reaching to destination.

32. Result table is looking like this.

33. This signifies following things.

- Lookup Transformation is Case-sensitive

- If 2 reference records are existing then Lookup Trans. picks up only 1st record.

- Lookup Trans. fails if joining key column is not in reference table in case we haven't configure No

Match output.

Merge Transformation in SSIS

In this article, we’ll look at another type of component: the Merge Join transformation. The Merge Jointransformation lets us join data from more than one data source, such as relational databases or text files, into a single data flow that can then be inserted into a destination such as a SQL Server database table, Excel spreadsheet, text file, or other destination type. The Merge Join transformation is similar to performing a join in a Transact-SQL statement. However, by using SSIS, you can pull data from different source types. In addition, much of the work is performed in-memory, which can benefit performance under certain condition.

In this article, I’ll show you how to use the Merge Join transformation to join two tables from two databases into one data flow whose destination is a single table. Note, however, that although I retrieve data from the databases on a single instance of SQL Server, it’s certainly possible to retrieve data from different servers; simply adjust your connection settings as appropriate.

You can also use the Merge Join transformation to join data that you retrieve from Excel spreadsheets, text or comma-separated values (CSV) files, database tables, or other sources. However, each source that you join must include one or more columns that link the data in that source to the other source. For example, you might want to return product information from one source and manufacturer information from another source. To join this data, the product data will likely include an identifier, such as a manufacturer ID, that can be linked to a similar identifier in the manufacturer data, comparable to the way a foreign key relationship works between two tables. In this way, associated with each product is a manufacturer ID that maps to a manufacturer ID in the manufacturer data. Again, the Merge Join transformation is similar to performing a join in T-SQL, so keep that in mind when trying to understand the transformation.

Preparing the Source Data for the Data Flow

Before we actually set up our SSIS package, we should ensure we have the source data we need for our data flow operation. To that end, we need to two databases: Demo and Dummy. Of course, you do not need to use the same data structure that we’ll be using for this exercise, but if you want to follow the exercise exactly as described, you should first prepare your source data.

To help with this demo, I created the databases on my local server. I then copied data from theAdventureWorks2008 database into those databases. Listing 1 shows the T-SQL script I used to create the databases and their tables, as well as populate those tables with data.

USE master;

GO

IF DB_ID('Demo') IS NOT NULL

DROP DATABASE Demo;

GO

CREATE DATABASE Demo;

GO

IF DB_ID('Dummy') IS NOT NULL

DROP DATABASE Dummy;

GO

CREATE DATABASE Dummy;

GO

IF OBJECT_ID('Demo.dbo.Customer') IS NOT NULL

DROP TABLE Demo.dbo.Customer;

GO

SELECT TOP 500

CustomerID,

StoreID,

AccountNumber,

TerritoryID

INTO Demo.dbo.Customer

FROM AdventureWorks2008.Sales.Customer;

IF OBJECT_ID('Dummy.dbo.Territory') IS NOT NULL

DROP TABLE Dummy.dbo.Territory;

GO

SELECT

TerritoryID,

Name AS TerritoryName,

CountryRegionCode AS CountryRegion,

[Group] AS SalesGroup

INTO Dummy.dbo.Territory

FROM AdventureWorks2008.Sales.SalesTerritory;

Listing 1: Creating the Demo and Dummy databases

As Listing 1 shows, I use a SELECT…INTO statement to create and populate the Customer table in the Demo database, using data from the Customer table in the AdventureWorks2008 database. I then use a SELECT…INTO statement to create and populate the Territory table in the Dummy database, using data from the SalesTerritory table in theAdventureWorks2008 database.

Creating Our Connection Managers

Once we’ve set up our source data, we can move on to the SSIS package itself. Our first step, then, is to create an SSIS package. Once we’ve done that, we can add two OLE DB connection managers, one to the Demo database and one to the Dummy database.

To create the first connection manager to the Demo database, right-click the Connection Manager window, and then click New OLE DB Connection, as shown in Figure 1.

Figure 1: Creating an OLE DB connection manager

When the Configure OLE DB Connection Manager dialog box appears, click New. This launches the Connection Manager dialog box, where you can configure the various options with the necessary server and database details, as shown in Figure 2. (For this exercise, I created the Demo and Dummy databases on the ZOO-PC\CAMELOT SQL Server instance.)

Figure 2: Configuring a new connection manager

After you’ve set up your connection manager, ensure that you’ve configured it correctly by clicking the Test Connection button. You should receive a message indicating that you have a successful connection. If not, check your settings.

Assuming you have a successful connection, click OK to close the Connection Manager dialog box. You’ll be returned to the Configure OLE DB Connection Manager dialog box. Your newly created connection should now be listed in the Data connections window.

Now create a connection manager for the Dummy database, following the same process that you used for the Demodatabase.

The next step, after adding the connection managers to our SSIS package, is to add a Data Flow task to the control flow. As you’ve seen in previous articles, the Data Flow task provides the structure necessary to add our components (sources, transformations, and destinations) to the data flow.

To add the Data Flow task to the control flow, drag the task from the Control Flow Items section of the Toolbox to the control flow design surface, as shown in Figure 3.

Figure 3: Adding a Data Flow task to the control flow

The Data Flow task serves as a container for other components. To access that container, double-click the task. This takes you to the design surface of the Data Flow tab. Here we can add our data flow components, starting with the data sources.

Adding Data Sources to the Data Flow

Because we’re retrieving our test data from two SQL Server databases, we need to add two OLE DB Sourcecomponents to our data flow. First, we’ll add a source component for the Customer table in the Demo database. Drag the component from the Data Flow Source s section of the Toolbox to the data flow design surface.

Next, we need to configure the OLE DB Source component. To do so, double-click the component to open the OLE DB Source Editor, which by default, opens to the Connection Manager page.

We first need to select one of the connection managers we created earlier. From the OLE DB connection managerdrop-down list, select the connection manager you created to the Demo database. On my system, the name of the connection manager is ZOO-PC\CAMELOT.Demo. Next, select the dbo.Customer table from the Name of the table or the view drop-down list. The OLE DB Source Editor should now look similar to the one shown in Figure 4.

Figure 4: Configuring the OLE DB Source Editor

Now we need to select the columns we want to retrieve. Go to the Columns page and verify that all the columns are selected, as shown in Figure 5. These are the columns that will be included in the component’s output data flow. Note, however, if there are columns you don’t want to include, you should de-select those columns from theAvailable External Columns list. Only selected columns are displayed in the External Column list in the bottom grid. For this exercise, we’re using all the columns, so they should all be displayed.

Figure 5: Selecting columns in the OLE DB Source Editor

Once you’ve verified that the correct columns have been selected, click OK to close the OLE DB Source Editor.

Now we must add an OLE DB Source component for the Territory table in the Dummy database. To add the component, repeat the process we’ve just walked through, only make sure you point to the correct database and table.

After we’ve added our source components, we can rename them to make it clear which one is which. In this case, I’ve renamed the first one Demo and the second one Dummy, as shown in Figure 6.

Figure 6: Setting up the OLE DB source components in your data flow

To rename the OLE DB Source component, right-click the component, click Rename, and then type the new name directly in the component.

Adding the Merge Join Transformation to the Data Flow

Now that we have the data sources, we can add the Merge Join transformation by dragging it from the Data Flow Transformations section of the Toolbox to the data flow design surface, beneath the source components, as shown in Figure 7.

Figure 7: Adding the Merge Join transformation to the data flow

We now need to connect the data flow paths from the Demo and Dummy source components to the Merge Jointransformation. First, drag the data path (green arrow) from the Demo source component to the Merge Jointransformation. When you attach the arrow to the transformation, the Input Output Selection dialog box appears, displaying two options: the Output drop-down list and the Input drop-down list. The Output drop-down list defaults to OLE DB Source Output, which is what we want. From the Input drop-down list, select Merge Join Left Input, as shown in Figure 8. We’ll use the other option, Merge Join Right Input, for the Dummy connection.

Figure 8: Configuring the Input Output Selection dialog box

Next, connect the data path from the Dummy data source to the Merge Join transformation. This time, the Input Output Selection dialog box does not appear. Instead, the Input drop-down list defaults to the only remaining option: Merge Join Right Input. Your data flow should now resemble the one shown in Figure 9.

Figure 9: Connecting the source components to the Merge Join transformation

You may have noticed that a red circle with a white X is displayed on the Merge Join transformation, indicating that there is an error. If we were to run the package as it currently stands, we would receive the error message shown in Figure 10.

Figure 10: Receiving a package validation error on the Merge Join transformation

The reason for the error message is that the data being joined by a Merge Join transformation must first be sorted. There are two ways of achieving this: by sorting the data through the OLE DB S ource component or by adding aSort transformation to the data flow.

Sorting Data Through the OLE DB Source

To sort the data through the OLE DB source component, you must first modify the connection to use a query, rather than specifying a table name. Double-click the Demo source component to open the OLE DB Source Editor. From the Data access mode drop-down list, select SQL command. Then, in the SQL command text window, type the following SELECT statement:

SELECT CustomerID, StoreID, AccountNumber, TerritoryID

FROM dbo.Customer

ORDER BY TerritoryID

The Connection Manager page of the OLE DB Source Editor should now look similar to the one shown in Figure 11.

Figure 11: Defining a SELECT statement to retrieve data from the Customer table

Once you’ve set up your query, click OK to close the OLE DB Source Editor. You must then use the advanced editor of the OLE DB Source component to sort specific columns. To access the editor, right-click the component, and then click Show Advanced Editor.

When the Advanced Editor dialog box appears, go to the Input and Output Properties tab. In the Inputs and Outputs window, select the OLE DB Source Output node. This will display the Common Properties window on the right-hand side. In that window, set the IsSorted option to True, as shown in Figure 12.

Figure 12: Configuring the IsSorted property on the Demo data source

We have told the package that the source data will be sorted, but we must now specify the column or columns on which that sort is based. To do this, expand the Output Columns subnode (under the OLE DB Source Outputnode), and then select TerritoryID column. The Common Properties window should now display the properties for that column. Change the value assigned to the SortKeyPosition property from 0 to a 1, as shown in Figure 13. The setting tells the other components in the data flow that the data is sorted based on the TerritoryID column. This setting must be consistent with how you’ve sorted your data in your query. If you want, you can add additional columns on which to base your sort, but for this exercise, the TerritoryID column is all we need.

Figure 13: Configuring the SortKeyPosition property on the Territory ID column

Once you’ve configured sorting on the Demo data source, click OK to close the Advanced Editor dialog box.

Adding a Sort Transformation to the Data Flow

Another option for sorting data in the data flow is to use the Sort transformation. When working with an OLE DB Source component, you usually want to use the source component’s T-SQL query and advanced editor to sort the data. However, for other data sources, such as a text file, you won’t have this option. And that’s where the Sorttransformation comes in.

Delete the data path that connects the Dummy data source to the Merge Join transformation by right-clicking the data path and then clicking Delete.

Next, drag the Sort transformation from the Data Flow Transformations section of the Toolbox to the data flow design surface, between the Dummy data source and the Merge Join transformation, as shown in Figure 14.

Figure 14: Adding the Sort transformation to the data flow

Drag the data path from the Dummy data source to the Sort transformation. Then double-click the Sorttransformation to open the Sort Transformation Editor, shown in Figure 15.

Figure 15: Configuring the Sort transformation

As you can see in the figure, at the bottom of the Sort Transformation Editor there is a warning message indicating that we need to select at least one column for sorting. Select the checkbox to the left of the TerritoryIDcolumn in the Available Input Columns list. This adds the column to the bottom grid, which means the data will be sorted based on that column. By default, all other columns are treated as pass-through, which means that they’ll be passed down the data flow in their current state.

When we select a column to be sorted, the sort order and sort type are automatically populated. These can obviously be changed, but for our purposes they’re fine. You can also rename columns in the Output Aliascolumn by overwriting what’s in this column.

Once you’ve configured the sort order, click OK to close the Sort Transformation Editor.

We now need to connect the Sort transformation to the Merge Join transformation, so drag the data path from theSort transformation to the Merge Join transformation, as shown in Figure 16.

Figure 16: Connecting the Sort transformation to the Merge Join transformation

That’s all we need to do to sort the data. For the customer data, we used the Demo source component. For the territory data, we used a Sort transformation. As far as the Merge Join transformation is concerned, either approach is fine, although, as mentioned earlier, if you’re working with an OLE DB Source component, using that component is usually the preferred method.

Configuring the Merge Join Transformation

Now that we have our source data sorted and the data sources connected to the Merge Join transformation, we must now configure a few more settings of the Merge Join transformation.

Double-click the transformation to launch the Merge Join Transformation Editor, as shown in Figure 17.

Figure 17: The default settings of the Merge Join Transformation Editor

Notice that your first setting in the Merge Join Transformation Editor is the Join type drop-down list. From this list, you can select one of the following three join types:

Left outer join: Includes all rows from the left table, but only matching rows from the right table. You can use the Swap Inputs option to switch data source, effectively creating a right outer join.Full outer join: Includes all rows from both tables.Inner join: Includes rows only when the data matches between the two tables.

For our example, we want to include all rows from left table (Customer) but only rows from the right table (Territory) if there’s a match, so we’ll use the Left outer join option.

The next section in the Merge Join Transformation Editor contains the Demo grid and Sort Grid. The Demo grid displays the table from the Demo data source. The Sort grid displays the table from the Dummy data source. However, because the Sort transformation is used, the Sort transformation is considered the source of the data. Had we changed the output column names in the Sort transformation, those names would be used instead of the original ones.

Notice that an arrow connects the TerritoryID column in the Demo grid to that column in the Sort grid. SSIS automatically matches columns based on how the data has been sorted in the data flow. In this case, our sorts are based on the TerritoryID column in both data sources, so those columns are matched and serve as the basis of our join.

You now need to select which columns you want to include in the data set that will be outputted by the Merge Jointransformation. For this exercise, we’ll include all columns except the AccountNumber column in the Customer table and the TerritoryID column from the Territory table. To include a column in the final result set, simply select the check box next to the column name in either data source, as shown in Figure 18.

Figure 18: Specifying the columns to include in the merged result set

Notice that the columns you select are included in the lower windows. You can provide an output alias for each column if you want, but for this exercise, the default settings work fine.

Once you’ve configured the Merge Join transformation, click OK to close the dialog box. You’re now ready to add your data destination to the data flow.

Adding an OLE DB Destination to the Data Flow

Our final step in setting up the data flow is to add an OLE DB Destination component so that we can save our merged data to a new table. We’ll be using this component to add a table to the Demo database and populate the table with joined data. So drag the OLE DB Destination component from the Data Flow Destinations section of the Toolbox to the data flow design surface, beneath the Merge Join transformation, as shown in Figure 19.

Figure 19: Adding an OLE DB Destination component to the data flow

We now need to connect the OLE DB Destination component to the Merge Join transformation by dragging the data path from the transformation to the destination. Next, double-click the destination to open the OLE DB Destination Editor.

On the Connection Manager page of the OLE DB Destination Editor, specify connection manager for the Demodatabase. Then, from the Data access mode drop-down list, select Table or view - fast load, if it’s not already selected.

Next, we’re going to create a target table to hold our result set. Click the New button next to the Name of the table or the view option and the Create Table dialog box opens. In it a table definition is automatically generated that reflects the columns passed down the data flow. You can rename the table (or any other element) by modifying the table definition. On my system, I renamed the table Me rge_Output, as shown in Figure 20, once you are happy with the table definition click on OK.

Figure 20: Using an OLE DB Destination component to create a table

Click on OK to close the Create Table dialog box. When you’re returned to the OLE DB Destination Editor, go to the Mappings page and ensure that your columns are all properly mapped. (Your results should include all columns passed down the pipeline, which should be the default settings.) Click OK to close the OLE DB Destination Editor. We have now set up a destination so all that’s left to do is to run it and see what we end up with.

Running Your SSIS Package

Your SSIS package should now be complete. The next step is to run it to make sure everything is working as you expect. To run the package, click the Start Debugging button (the green arrow) on the menu bar.

If you ran the script I provided to populate your tables, you should retrieve 500 rows from the Demo data source and 10 from the Dummy data source. Once the data is joined, you should end up with 500 rows in the Merge_Outputtable. Figure 21 shows the data flow after successfully running.

Figure 21: Running the SSIS package to verify the results

One other check we can do is to look at the data that has been inserted into the Merge_Output table. In SQL Server Management Studio (SSMS), run a SELECT statement that retrieves all rows from the Merge_Output table in theDemo database. The first dozen rows of your results should resemble Figure 22.

Figure 22: Partial results from retrieving the data in the Merge_Output table

Merge Transformation in SSIS:

In this post we are gonna discuss about MERGE transformation. MERGE in SSIS is equal to UNION ALL in SQL Server. This transformation unions two datasets/tables.The merge Transformation combines two sorted dataset into single dataset. Highlighted the text SORTED in last statement because “It is not possible to use MERGE when the inputs are NOT SORTED”. There is one more transformation which is very similar to this i.e UNION ALL. The Merge Transformation is similar to the union all transformations. Use the union all transformation instead of the merge transformation in the following situations.

- The transformation inputs are not sorted

- The combined output does not need to be sorted.

- The transformation has more than two inputs.

MERGE takes ONLY TWO inputs where as UNION ALL can take more than two. Now lets see how to configure MERGE transformation with an example.

I created TWO tables with names MergeA and MergeB and inserted few records into each table as shown below.

- Open a new project and drag a Data Flow task from toolbox in Control Flow.

- Edit the Data Flow task by double clicking the object or by selecting EDIT button on Right click on the object. Make sure the Data Flow Page is opened as shown below.

- Select TWO OLE DB data sources from data flow sources and drag and drop it in the data flow.

- Double click on the OLE DB data source to open a new window where we can set the properties of the connection.

- Select the connection manager and click on new button to set the connection string to the TWO tables as shown below.

- Set the connecteions to the TWO tables created using TWO OLE DB data sources.

- As said earlier, we need to SORT the data before giving as input to the MERGE transformation and hence drag and drop TWO SORT transformations into Data Flow pane and provide output of each OLE DB source to each Sort transformation as shown below.

- Open the SORT configuration and select the Checkbox as EmpNo which means the SORT will happen on EmoNo column a s shown below and apply the same in both the SORT transformations. Also provide sort type as either Ascending or Descending as per your requirement.

- The Input data from both the sources is SORTED now and hence add MERGE transformation to the pane and provide OUTPUT of both sort transformations as input to MERGE transformation as shown below.

- Now drag and drop Flat File Destination to see the output on file, make connection between the Merge and the Flat File Destination as shown above.

- Double click on the Flat File Destination to configure, In CONNECTION MANAGER select the NEW Option to set file path of the file and Click OK. If you select a file which already Exists then it will take that file else a NEW file will be created.

- Check the mappings between Available Input Columns and Available Destination Columns and click OK.

- Now the Package is ready which pulls the data from TWO tables then sorts and then mergers the data that is coming from two sources before copying to destination file. Trigger the packages and make sure all turns to GREEN.

- Now open the file and see the data copied into the file which is coming from TWO sources.

In the above example I have taken OLE DB sources and Flat file destination to explain MERGE transformation. You can use any SOURCE and DESTINATION types depending on your requirements. The key things to keep in mind while using MERGE transformation -

- Same number of columns should be there in both the sources.

- Same data types should be present for mapping columns.

- Data should be sorted before giving as input to MERGE.

Import Column Transformation in SSIS:

The Import Column transformation reads data from files and adds the data to columns in a data flow. Using this transformation, a package can add text and images stored in separate files to a data flow. For example, a data flow that loads data into a table that stores product information can include the Import Column transformation to import customer reviews of each product from files and add the reviews to the data flow.

A column in the transformation input contains the names of files that hold the data. Each row in the dataset can specify a different file. When the Import Column transformation processes a row, it reads the file name, opens the corresponding file in the file system, and loads the file content into an output column. The data type of the output column must be DT_TEXT, DT_NTEXT, or DT_IMAGE.

Now let start step by step, Here I am moving a text file from a flat file source to a table in the database.

For the importing a text file into a database, we must have a table that hold the text file value and the path of the files. Let’s create a table named as Text_test.

Here I am having some text file and file called path, where it has all the files path.

Let start the BIDS and select a data flow task .

Click on the data flow task and then drag a Flat file source .

Now configure the Flat file source and connect to the file that has all the files path as I connected to path.txt which have all the files path.

You can see the data present in the file in Column tab.

Some times you might get Truncation Error on Filepath or input columns Be Aware of it. By default it lengths are 50. So kindly go for Advance tab and increase its length to maximum like 300.

Now take the Import Column and connect it to the Flat file source.

Configure the Import Column Transform

In the first page I mean Component Properties Tab you don’t need to do anything ,select Input Column tab where you will see source available columns check the filepath column.

Now select Input and Output Properties Tab where you will see these three option.

Import Column Input is already set to the selected source columns input.

Select Import Column Output and where add an output column by clicking Add Column.

I created an output column with the name of TextFileColumn.

We have to do one important step here we have to put this LineageID 29 highlighted in above screenshot. Into the Import column Input’s Filepath. Now press ok .

Now let’s configure the OLE DB destination and set the connection to the database where you created a table called Text_test.

Now mapping tab map the import Column output to the table Text_test Column and press “OK”.

Now execute the task.

The task executed successfully. Let’s check the output table in SSMS.

Here you can see all the files are saved to the Text files Column.

Difference between Merge and Merge join Transformations?

Difference and

similarity between merge and merge join transformation?

Sno

|

Merge Transformation

|

Merge Join

Transformation

|

1

|

The data from 2 input paths

are merged into one

|

The data from 2 inputs are

merged based on some

common Key

|

2

|

Works as UNION

|

JOIN (LEFT, RIGHT OR FULL)

|

3

|

Supports 2

Datasets

|

1 Dataset

|

4

|

Metadata for all columns

needs to be same

|

Key columns metadata needs to be same.

|

5

|

Data must be sorted

|

Data must be sorted

|

6

|

Does not support error

handling

|

Does not support error

handling

|

Difference between Merge join and Lookup Transformation in SSIS

Difference between Merge and Union all transformation

a) Merge transformation can accept only two inputs whereas Union all can take more than two inputs

b) Data has to be sorted before Merge Transformation whereas Union all doesn't have any condition like that.

MERGE JOIN Transformation in SSIS:

SSIS - How to Perform Union Operation in SSIS Package

Scenario:

We have two text files and we want to perform Union operation ( only get distinct records from both files) and load to our Destination. When we look in Data Flow Items, we don't see any Transformation that's name is Union. So how would we do this in SSIS?

Solution 1 ( Use Sort Transformation)

In this post we will first use Union All Transformation to union all records. Union All Transformation is going to return us all records, if they are present multiple times, Union All Transformation is going to return us multiple records. Then we will use Sort Transformation to eliminate duplicates and keep only one copy of them. Let's start with step by step approach

Step 1:

Create two text files as shown below. As you can see I have one record ( Aamir,Shahzad,XYZ Address) that is present in both files, rest of records are unique.

Fig 1: Text files for Union Operation in SSIS Package

Step 2:

Create new SSIS Package. Inside the SSIS Package, Bring the Data Flow Task to Control Flow Pane. Inside Data Flow Task, Bring Two Flat File Sources and create connection to TestFile1 and TestFile2.

Bring the Union All Transformation in Data Flow Pane and Connect the Both Flat File Source to it. As my column names in Testfile1 and TestFile2 are same, It will automatically map them. If your columns names are different , double click on Union All Transformation and map the columns from sources.

Fig 2: Union All Transformation in SSIS to merge Data from Two Sources

As Union All is going to return us all records , even duplicates. We want to get only distinct records as Union operation. Let's bring Sort Transformation and configure as shown below

Fig 3: Use Sort Transformation To Remove Duplicate Records

Now we can write these records to destination table or file. In my case just to show you, It worked, I am going to put Multicast Transformation and then add Data Viewer between Sort and Multicast Transformation to show you we performed Union Operation by using Union All and Sort Transformation together. If you want to learn more about Data Viewer, you can check

this post.

Fig 4: Performing Union Operation in SSIS Package by using Union All and Sort Together

As we can see in Fig 4, two records are read from each source. Union All Transformation returned us 4 records( Aamir,Shahzad,XYZ) as duplicate record. We used Sort Transformation to eliminate duplicates so we can get output Union would have return us. Sort removed the duplicate copies and returned us three records.

Solution 2 ( Use Aggregate Transformation)

We can use Aggregate Transformation with Union All Transformation to perform Union Operation in SSIS as well.

Instead of using Sort, let's put Aggregate Transformation after Union All Transformation and configure as shown below

Fig 5: Perform Union Operation in SSIS By using Aggregate Transformation

Let's run our SSIS Package and see if this package is performing the Union should.

Fig 6: Performing Union Operation By Using Union All and Aggregate Transformation Together

If you have small number of records and enough memory on machine where you are running the SSIS Package, this can be quick solution. If you have large number of records , These package can take long time to run. As Sort and Aggregate Transformation are blocking transformations. If you have large number of records, You might want to inserted them into database tables and then perform Union operation.

MultiCast Transformation in SSIS:

Multicast transformation is useful when ever you wish to make many copies of same data or you need to move the same data to different pipelines. It has one input and many outputs. I will replicate the same input what is takes and returns many copies of the same data. Lets take a simple example to demonstrate the same.

For this I am creating a table and inserting data into it. If you wish to use existing table then you can go ahead with that.

Note – I am creating 5 more tables with the same structure which I will use as Destination tables.

create table emp

(

emp_name varchar(100),

emp_id int,

sal money,

desig varchar(50),

joindate datetime,

enddate datetime

)

Inserting data into the same table.

insert into emp values(‘sita’,123,30000,’software engineer’,12-03-2011)

insert into emp values(‘ramu’,345,60000,’team lead’,15-06-2009)

insert into emp values(‘lakshman’,567,25000,’analyst’,18-02-2011)

insert into emp values(‘charan’,789,40000,’administrator’,27-05-2011)

insert into emp values(‘akhil’,234,30000,’software engineer’,24-07-2011)

insert into emp values(‘kaveri’,456,50000,’hr’,26-12-2009)

insert into emp values(‘nimra’,678,50000,’adminidtrator’,19-06-2010)

insert into emp values(‘lathika’,891,35000,’system analyst’,23-05-2010)

insert into emp values(‘yogi’,423,70000,’tech lead’,12-09-2009)

insert into emp values(‘agastya’,323,70000,’team lead’,23-04-2008)

insert into emp values(‘agastya’,235,50000,’hr’,21-12-2009)

Now the source data is ready on which we can apply Multicast transformation and move to multiple destinations. PFB the steps to be followed.

- Open a new project and drag a Data Flow task from toolbox in Control Flow.

- Edit the Data Flow task by double clicking the object or by selecting EDIT button on Right click on the object. Make sure the Data Flow Page is opened as shown below.

- Select OLE DB data source from data flow sources and drag and drop it in the data flow.

- Double click on the OLE DB data source to open a new window where we can set the properties of the connection.

- Select the connection manager and click on new button to set the connection string as shown below.

- Set the connection to the database by providing the Server name,database name and authentication details if required.

- After the connection is set, select Data Access mode as “Table or View” as shown below and then select the table which we created just now.

- Drag and drop MultiCast Transformation into the Data Flow Pane and provide connection between OLE DB Source and Multicast Transformation as shown below.

- Now from Multicast we can take many outputs and to demostrate the same drag and drop OLE DB Destination and give a connection between MultiCast transformation and OLE DB destination then EDIT the destination transformation to provide the connection details to ONE of the FIVE tables created with same structure.

- Check the mappings and click OK.

- Like above, we can create N number of destinations and for this demo purpose I created 5 similar destinations pointing to different tables we created as shown below.

- In the above pic, you can see that Many outputs are coming from one Multicast transformation and the same is pointed to different OLE DB destinations. Now the package is all set to go. Trigger it and wait till all the items turn GREEN.

That is it !! you can see Multicast taking one input of 11 rows and converting it to many copies and sending to different destinations.

This is how you can configure and use Multicast transformation. You can give output of Multicast to another transformation too before passing it to destination.

Difference between Multicast Transformation and Conditional Split transformation

Multicast Transformation generates exact copies of the source data, it means each recipient will have same number of records as the source whereas the Conditional Split Transformation divides the source data on the basis of defined conditions and if no rows match with this defined conditions those rows are put on default output.

In a data warehousing scenario, it's not rare to replicate data of a source table to multiple destination tables, sometimes it's even required to distribute data of a source table to two or more tables depending on some condition. For example splitting data based on location. So how we can achieve this with SSIS?

SSIS provides several built-in transformation tasks to achieve these kinds of goals and in this tip I am going to discuss two of them:

- Multicast Transformation and

- Conditional Split Transformation and how they differ from each other.

Multicast Transformation

Sometimes it's required to have multiple logical copies of source data of a single source table either for applying different transformation logic on each set or having each dataset for separate consumers, Multicast transformation helps to do this.

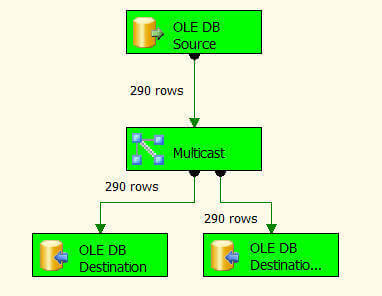

In nutshell, a Multicast transformation is used to create/distribute exact copies of the source dataset to one or more destination datasets. In this example I am going to distribute data from [HumanResources].[Employee] table to [HumanResources].[Employee1] and [HumanResources].[Employee2] tables, please note that the multicast transformation distributes the whole set of data to all outputs, it means if the [HumanResources].[Employee] table has 290 records then both [HumanResources].[Employee1] and [HumanResources].[Employee2] tables will have 290 records each as well.

Launch SQL Server Business Intelligence Development Studio and create a new project.

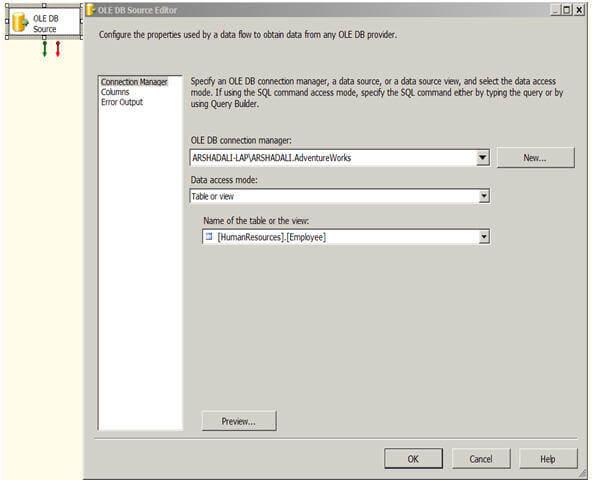

Let's start with a "Data Flow Task". Double click on it to open the Dataflow Pane and then drag an OLE DB Source on the working area and configure as shown below.

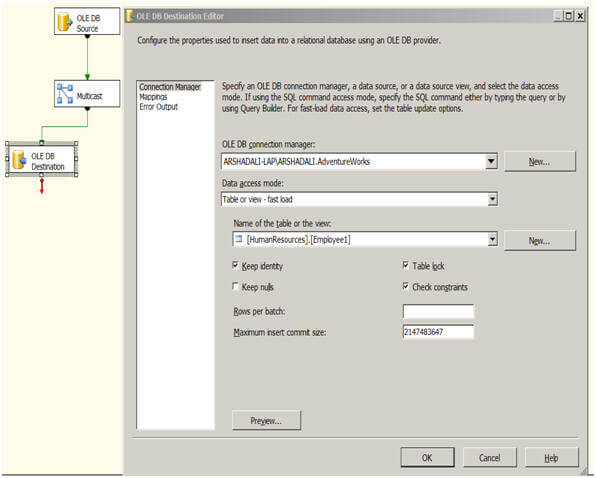

Then drag a Multicast Transformation and OLE DB Destination from the Toolbox as shown below and connect the tasks using the green arrow of OLE DB Source to the Multicast and then from the Multicast to the OLE DB Destination as shown below. Next right click on the OLE DB Destination and specify the destination connection manager and destination table as shown below. Please check the "Keep Identity" property on the OLE DB Destination editor to preserve the identity value coming from the source table we are using in this example.

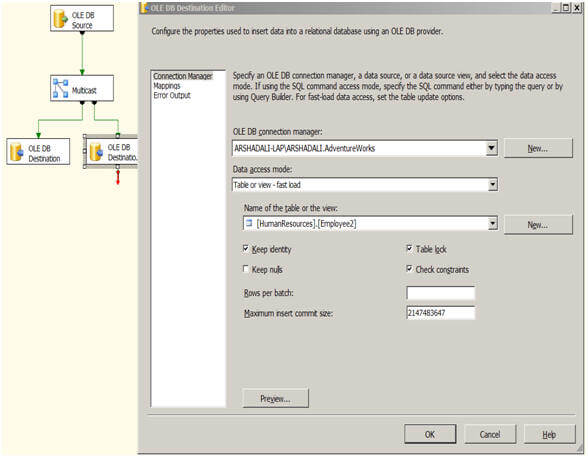

Next drag another OLE DB Destination from the Toolbox, drag another green arrow from the Multicast to the new OLE DB Destination as shown below. Next right click on the second OLE DB Destination and specify the destination connection manager and destination table. Please check the "Keep Identity" property for this one as well.

Once you are done with all these configuration, just hit F5 or click on the Play icon on the IDE, then you can see the execution of the package.

Please note the below image, from the source there are 290 records and both destination tables have 290 records written as well as they should.

Multicast transformation was introduced in SSIS 2005 and further enhanced and optimized in SSIS 2008 to use multithreading while writing data to multiple destination refer to this

article.

Conditional Split Transformation

Conditional Split Transformation is used to categorize the incoming source data into multiple categories as per defined conditions. What I mean here is, very much like the SWITCH CASE statement of any programming language, the conditional split transformation tests each incoming row on those defined conditions and wherever it matches with any of them it directs the row to that destination.

For example, consider you have a customer table, you want data of this table to be categorized on the basis of Age into Juniors, Adults and Seniors, another example, which I will be demonstrating in this tip, is if you have an employee table and you want data to be divided into Male and Female based on the value in the Gender column.

Conditional split also provides a default output in the case that the incoming row does not match any of the defined conditions, the row goes to the default output. This default output is normally used to move erroneous rows to a separate table.

Let's create another package and drag a Data Flow Task. Double click on it to open the Data Flow pane, now drag an OLE DB Source on the working area and configure it like this.

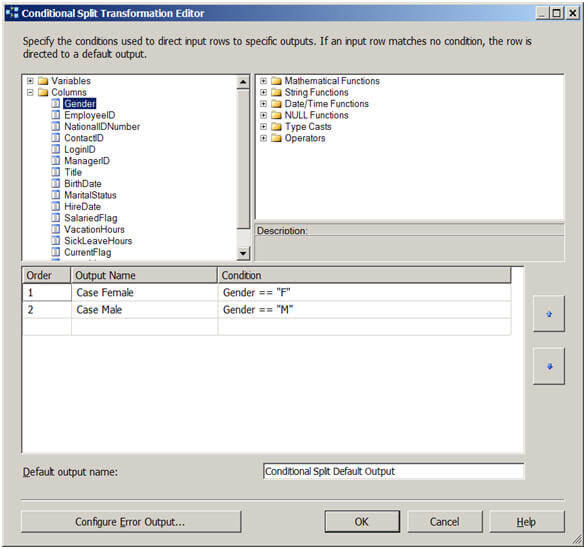

Then drag a Conditional Split Transformation from the Toolbox, drag the tiny green arrow of the OLE DB Source to the Conditional Split Transformation and configure it like this.

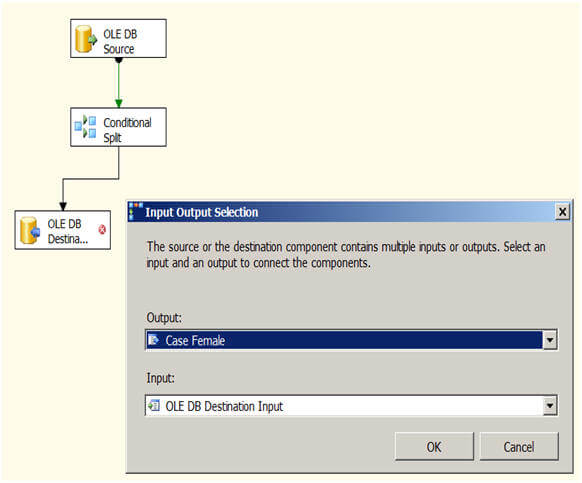

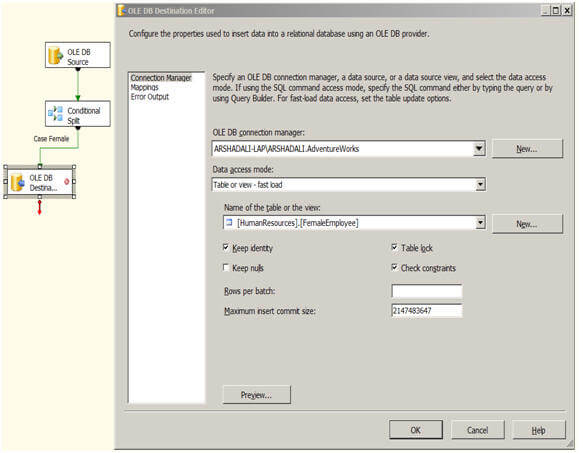

Next drag an OLE DB Destination and then drag the tiny green arrow from the Conditional Split Transformation to the OLE DB Destination. At this point it will ask you the condition to use which you just setup in the previous step. For the first one I selected "Case Female" and then I setup my OLE DB Destination as shown below. Once you are done with this OLE DB Destination configuration for Female, you need to repeat the same process for another OLE DB Destination configuration for Male.

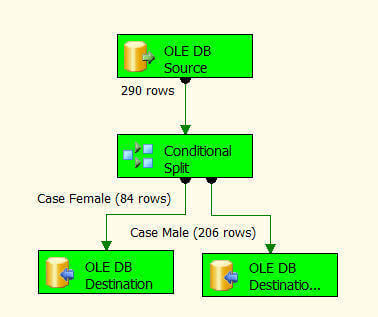

After this has been setup you can execute the package and it should look like the below image. As you can see out of the 290 source records 84 are related to female and moved to the FemaleEmployee table and 206 records are related to male and hence moved to the MaleEmployee table.

OLEDBCommand Transformation in SSIS

SSIS - How To Use OLE DB Command Transformation [ Delete Rows in Data Flow Task]

Scenario:

We have a SQL table which contains our data. Every day we get a text file from our users and they want to delete records from SQL table those match with the text file records those they have provided. The records in text file are less than 50 all the time and we are not allowed to create any staging table for this new process.

Solution:

If we could load the data into a staging table and then write Delete statement by joining two tables that would be better solution(set based queries). As we do not have option to create table and we have to handle everything in Data Flow task. We will be using OLE DB Command Transformation to do the job for us. OLE DB Command Transformation will perform row by row operation but in our case it will be OK as number of rows are always going to be less than 50. If you have a lot of deletes/updates, insert that data into some staging table and use set base queries in Execute SQL task to do the job.

Here is our solution for deleting few records on daily basis from SQL Table by matching records from text file.

Step 1:

Create a table with data by using below Query

CREATE TABLE [dbo].[DestinationTable](

[CountryName] [varchar](50) NULL,

[SalePersonName] [varchar](50) NULL

)

GO

insert into [dbo].[DestinationTable]

Select *

FROM (

SELECT N'uSA' AS [countryname], N'aamir shahzad' AS [salepersonname] UNION ALL

SELECT N'Italy' AS [countryname], N'andy' AS [salepersonname] UNION ALL

SELECT N'UsA' AS [countryname], N'Mike' AS [salepersonname] UNION ALL

SELECT N'brazil' AS [countryname], N'Sara' AS [salepersonname] UNION ALL

SELECT N'INdia' AS [countryname], N'Neha' AS [salepersonname] UNION ALL

SELECT N'Brazil' AS [countryname], N'Anna' AS [salepersonname] UNION ALL

SELECT N'Mexico' AS [countryname], N'Anthony' AS [salepersonname] ) t;

Step 2:

Create SSIS Package. After create SSIS Package, Create Flat File Connection and use below data in text file

CountryName,SalePersonName,SaleAmount

USA,aamir shahzad

Italy,andy

Step 3:

Bring OLE DB Command Transformation to Data Flow pane and connect your Flat File Source to it. After that do configure as shown by blow snapshots.

Choose the OLE DB Connection which is point to Database which has Destination table

Write the query as shown below.

Delete from dbo.DestinationTable where countryName=?

AND SalePErsonName=?

Map the input columns to the parameters as shown below, Remember our query we have provided CountryName first in query so we have to map to param_0 and then SalePersonName to param_1

Final Output:

As we can see in the snapshot, before running a package we had 7 records. After running package two records were deleted and only 5 records left in destination table.

SSIS- How to Use Row Count Transformation [Audit Information]

Scenario:

Let’s say we receive flat file from our client that we need to load into our SQL Server table. Beside loading the data from flat file into our destination table we also want to track how many records loaded from flat file. To keep track of Records Inserted we can create Audit table.

Solution:

As we have to keep track for number of records loaded, we need to create a table where we can insert this information. Let’s create a table with three columns

CREATE TABLE dbo.PkgAudit

(

PkgAuditID INT IDENTITY(1, 1),

PackageName VARCHAR(100),

LoadTime DATETIME DEFAULT Getdate(),

NumberofRecords INT

)

Step 1:

Create Connection Manager for your flat file. I used below records in flat file

CountryName,SalePersonName,SaleAmount

uSA,aamir shahzad,100

Italy,andy,200

UsA,Mike,500

brazil,Sara,1000

INdia,Neha,200

Brazil,Barbra,200

Mexico,Anthony,500

Step 2:

Create SSIS variable called RecordsInserted as shown below

Step 3:

Place Row Count Transformation to Data Flow Pane and connect Flat File Source to Row Count Transformation. Double click on Row Count Transformation and choose RecordsInserted Variable as shown below

Step 4:

Use any destination such as OLE DB Destination, Flat File where you want to insert data from Source. In our case I used Multicast for testing purpose as can be seen below

Step 4:

When we execute our package the rows are inserted into destination by passing Row Count Transformation. All the count is saved in the variable. Our next goal is to save this information to our Audit Table for record.

In Control Flow Pane , Bring Execute SQL Task and Configure as shown below

Map the User Variable (RecordsInserted) and System Variable( PackageName) to Insert statement as shown below

Final Output

Let's run our package and see if information is recorded in our Audit table. As you can see below 7 records were loading from source file to our destination. The same Audit table can be enhanced by adding more columns such as records update, record deleted , records rejected and save all these stats while execution of package in different variables and at the end insert into Audit Table.

- The Character Map transformation enables us to modify the contents of character-based columns

- Modified column can be created as a new column or can be replaced with original one

- The following character mappings are available:

--> Lowercase : changes all characters to lowercase

--> Uppercase : changes all characters to uppercase

--> Byte reversal : reverses the byte order of each character

--> Hiragana : maps Katakana characters to Hiragana characters

--> Katakana : maps Hiragana characters to Katakana characters

--> Half width : changes double-byte characters to single-byte characters

--> Full width : changes single-byte characters to double-byte characters

--> Linguistic casing : applies linguistic casing rules instead of system casing rules

--> Simplified Chinese : maps traditional Chinese to simplified Chinese

--> Traditional Chinese : maps simplified Chinese to traditional Chinese

EXAMPLE

Pre-requisite

Following script has to be created in DB

CREATE TABLE CharacterMapDemoSource (CID INT, LowerCaseVARCHAR(50), UpperCase VARCHAR(50), Byte NVARCHAR(50))

Go

INSERT INTO CharacterMapDemoSource (CID, LowerCase, UpperCase,Byte)

VALUES (1,'abc','ABC','demo'),

(2,'abC','AbC',N'搀攀洀漀')

Go

CREATE TABLE CharacterMapDemoDestination (CID INT, LowerToUpperVARCHAR(50), UpperToLower VARCHAR(50), ByteReversal NVARCHAR(50))

Go

Steps

1. Add Data Flow Task in Control Flow

2. Drag source in DFT and it should point to CharacterMapDemoSource Table.

3. Drag Character Map Transformation and do following settings.

4. We can add new column or we can replace existing one with new Output Alias

5. For each column, we can define different operation

6. Drag Destination and connect it with Character map

7. Records will look something like this.

8. ByteReversal operation basically goes by the byte of a string and reverses all the bytes.

- The Audit transformation lets us add columns that contain information about the package execution to the data flow.

- These audit columns can be stored in the data destination and used to determine when the package was run, where it was run, and so forth

- The following information can be placed in audit columns:

--> Execution instance GUID (a globally unique identifier for a given execution of the package)

--> Execution start time

--> Machine name

--> Package ID (a GUID for the package)

--> Package name

--> Task ID (a GUID for the data flow task)

--> Task name (the name of the data flow task)

--> User name

--> Version ID

EXAMPLE

Pre-requisite

Following script has to be executed in DB

CREATE TABLE AggregateDemo (ADID INT IDENTITY(1,1), StudentID INT,Subject varchar(10), Mark int)

GO

insert into AggregateDemo (StudentID,Subject,Mark)

values (1,'Maths',100),

(1,'Science',100),

(1,'English',90),

(2,'Maths',99),

(2,'Science',99),

(2,'English',95),

(3,'Maths',95),

(3,'Science',100),

(3,'English',98)

GO

CREATE TABLE AuditDemo (ADID INT, StudentID INT, Subjectvarchar(10), Mark int, ExecutionStartTime DATETIME, UserNameNVARCHAR(50), MachineName NVARCHAR(50))

GO

Steps

1. Add Data Flow Task in Control Flow

2. Drag source in DFT and it should point to AggregateDemo

3. Add Audit Transformation and connect it with Source

4. Add Destination and map columns

5. After execution of the package, records will look like this.

By default whenever package fails, error will be logged in Event viewer.

But still, we can maintain the complete execution history in customized way.

In Text File Log provider, you have specify text file. And it will start logging in that text file. It will append the text at every time.

In SQL Server Log provider, it will create a table called “ssislog”. And it will start inserting records.

Mainly, we are intersted in particular error. So we can do following settings.

Ssislog table will have records like this.

SSIS For Each Loop Container 2012:

This article demonstrates how to automate archival of files by using an SSIS job with a For Each Container that will use a File Enumerator and a File System Task to interate through files on a Working Directory and copy them to a uniquely named Archive directory nightly as a scheduled job or manually, depending on the need of the

company or party using it. The Archive Name convention uses SSIS Syntax to create a directory with a 2nd File System Task with a folder named using an Expression in a

Variable name for a new Folder for the files to be

copied into.

Create the Working Folder with Files

Create the folder with the working files that get modified on a daily basis that you need to archive. I used

C:\WorkingFiles\ as my folder.

Create Variables for the folder and the files to copy

Right click in the Control area and select variables. Create 2 variables - one for the files to copy and one for the

folder to create (based on the date) and the one to copy to. We will be creating an Expression to get the current

Date and convert it into a String for a new file folder name for each Archived file.

Expression for the new folder name

Syntax for the new folder name is a little tricky for SSIS. I need a string name for my new folder each night, so I

need to do a data conversion\cast with a DT_WSTR cast and parse the current date. I will use the RIGHT syntax to

parse the date into my new File Folder to store the files. The DT_WSTR string cast allows the Date Time variable

to be appended to the folder path as a unique location for backups for that day..

Add a new Foreach Loop Container to the Control Flow

Drag and drop a Foreach Loop Container into the Control Flow area of the Package. Right click on it to Edit the

details.

Edit the Foreach Loop Properties

Click on Collection, and make sure the Enumerator property is set to Foreac File Enumerator.

Click on the Variable Mappings in the left hand box and select the variable User::strFileName from the drop down.

Leave the 0 Index default. Click OK to finish.

Add and Configure a File System Task for the Foreach Loop Container

Drag and drop a File System Task directly onto the Foreach Loop Container. Right click on the task and select

Edit.

Make sure the the Operation is set to Copy File. Click in the drop downs for IsDestinationPathVariable and then

IsSourcePathVariable and change each one to True. Select the DestinationVariable and select the

User::strFolderName as the location for the files to go. Select the SourceVariable as the User::strFileName

variable. Click OK to finish once completed.

File System Task to Create Folder

Add one more File System Task to the Control Flow section of the Package to create the directory prior to the

copy task in the Foreach Loop. Right click on it and select Edit.

Select the Operation as Create directory from the drop down. Select IsSourcePathVariable = True and select the

User::strFolderName as the SourceVariable. This task will create the archive folder with the proper name (the

date). The folder must be created prior to the execution of the Foreach Loop or an error will be thrown - the loop

does not have the ability to test for the existence of the destination location prior to executing the Copy File task.

Execute The Foreach Loop Container Package

Click on the Green Arrow in the Toolbar or F5 to start Debugging. Notice that the Green Check marks indicate that

the steps have executed as expected.